AI Act: Political Agreement on the Digital Omnibus. What Changes for Businesses.

In the early hours of May 7, 2026, the EU Council and the European Parliament reached a provisional political agreement on Omnibus VII regarding the AI Act (Council Press Release 299/26). COREPER approval followed on May 15. Five key points concretely reshape the operational framework for businesses.

The Context: Why a Political Agreement Now

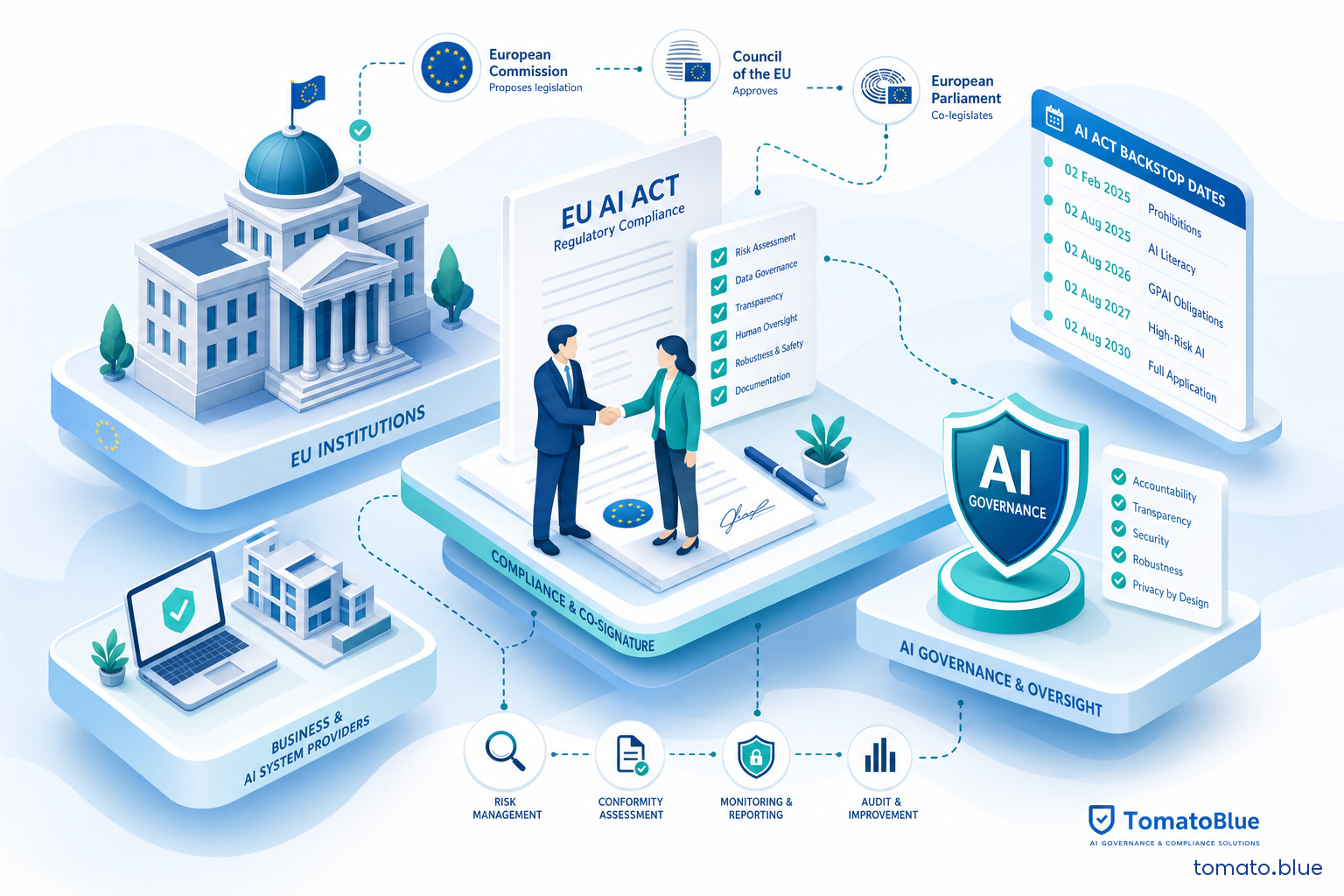

The AI Act has been in force since August 1, 2024. The first major operational deadline — the application of obligations for standalone high-risk AI systems (Annex III) — was set for August 2, 2026. With less than three months to that date, many organizations were navigating a compliance path built on incomplete harmonized standards, unconsolidated implementation tools, and widespread interpretive uncertainty.

The Digital Omnibus package, proposed by the Commission in November 2025, emerged from this pressure. The May 7 political agreement crystallizes its main provisions, anticipating formal adoption expected for June-July 2026 following the European Parliament plenary vote.

Important: until the plenary vote and formal Council adoption, the August 2, 2026 deadline remains legally binding. The correct strategy is a dual-track approach: maintain the original roadmap as a fallback, and calibrate compliance investments toward the new horizon.

1. Fixed Dates, No Longer Anchored to Standards

The most operationally significant change concerns the mechanism for triggering obligations for high-risk systems. The Commission's November 2025 proposal tied the application of these obligations to a set period after harmonized standards became available. That mechanism created structural uncertainty, since standardization timelines depend on third-party bodies (CEN/CENELEC) beyond any company's control.

The political agreement replaces this mechanism with fixed backstop dates:

| Category | New Deadline |
|---|---|
| Standalone high-risk AI systems (Annex III) | December 2, 2027 |
| High-risk AI embedded in regulated products (Annex I) | August 2, 2028 |

Backstop dates are not open-ended postponements: they are firm deadlines, independent of standards progress. If harmonized standards arrive earlier, the rules apply earlier. The real gain is certainty of the maximum deadline, finally enabling credible roadmap planning.

2. Watermarking: Seven Months, Not a Paper Exercise

The obligation to mark AI-generated synthetic content in a machine-readable way, set out in Art. 50(2) of the AI Act, was accompanied by a six-month grace period for adoption of technical standards. The political agreement shortens this grace period to three months, with a deadline of December 2, 2026.

Machine-readable watermarking is not a formality. It requires integrating marking technologies (metadata watermarks, C2PA, cryptographic hashes) into content production systems, revising publishing workflows, and verifying signal chain of custody in downstream systems. For organizations producing synthetic content at scale — images, video, audio, text — this is a technical project with a seven-month horizon from today.
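As a rough illustration of what "machine-readable" marking and downstream verification involve, the sketch below builds a sidecar provenance record containing an AI-generated flag and a SHA-256 hash of the content, then checks it later in the pipeline. It is a deliberately simplified stand-in: it is not C2PA and not a format prescribed by the AI Act, and the field names (`ai_generated`, `generator`, `sha256`) and function names are assumptions made for this example.

```python
import hashlib
import json


def build_provenance_record(content: bytes, generator: str) -> str:
    """Return a JSON provenance record for a piece of synthetic content.

    The schema is hypothetical and only illustrates the idea of a
    machine-readable marker tied to the exact bytes it describes.
    """
    record = {
        "ai_generated": True,
        "generator": generator,
        "sha256": hashlib.sha256(content).hexdigest(),
    }
    return json.dumps(record, sort_keys=True)


def verify_provenance_record(content: bytes, record_json: str) -> bool:
    """Check that the record flags the content as AI-generated and still matches it."""
    record = json.loads(record_json)
    hash_matches = record.get("sha256") == hashlib.sha256(content).hexdigest()
    return bool(record.get("ai_generated")) and hash_matches
```

A sidecar record like this is easily separated from the content it describes, which is one reason production approaches tend to embed or sign the signal rather than rely on a detached file.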

The agreement's implicit message is clear: the European legislator treats watermarking as a non-negotiable prerequisite for the information ecosystem, not one more item on a compliance checklist.

3. Explicit Prohibition of Nudifiers and CSAM

The agreement introduces a new prohibited practice under Art. 5: AI generation of non-consensual sexual or intimate content and child sexual abuse material (CSAM). This goes beyond the Commission's original proposal, which did not explicitly address this category.

The operational relevance for businesses is twofold. First, providers of generative models operating in Europe must verify that their systems cannot be used to produce such content — implying upstream filtering and control obligations. Second, distribution platforms that carry AI-generated content must implement detection and removal mechanisms compliant with the new prohibition, coordinated with existing DSA obligations.

For SMEs not operating in these domains, the prohibition still matters for supplier due diligence: it is one more safeguard to check when selecting AI tool providers.

4. Art. 6(4) Registration Reinstated

During the legislative process, an interpretive tension had emerged over the EU database registration obligation for systems that providers consider exempt from the high-risk classification. The Council had proposed eliminating this obligation during the trilogue with Parliament; the May 7 agreement reinstates it.

In practice: if a provider develops or places on the market a system that falls within an Annex III category but considers it not to pose a significant risk under the Art. 6(3) exemption, it must still register the system in the EU database, as Art. 6(4) requires, stating the grounds for the exemption. The registration obligation thus serves as a transparency and accountability mechanism, not a mere administrative formality.

Organizations that have already classified their AI systems need to verify: all systems falling within Annex III, including those classified as non-high-risk, must be entered in the register.
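The check described above can be sketched as a simple pass over an internal AI inventory. This is a minimal illustration, assuming a hypothetical `AISystem` record with fields invented for this example. The rule it encodes is the one stated in the text: Annex III scope, not the provider's own risk classification, determines whether a system belongs in the register.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    annex_iii: bool    # falls within an Annex III category
    high_risk: bool    # provider's own classification
    registered: bool   # already entered in the register


def missing_registrations(inventory: list[AISystem]) -> list[str]:
    """Names of Annex III systems not yet registered, whatever their risk class."""
    return [s.name for s in inventory if s.annex_iii and not s.registered]


# Hypothetical inventory used only to illustrate the rule.
inventory = [
    AISystem("cv-screening", annex_iii=True, high_risk=True, registered=True),
    AISystem("interview-scheduler", annex_iii=True, high_risk=False, registered=False),
    AISystem("spam-filter", annex_iii=False, high_risk=False, registered=False),
]
print(missing_registrations(inventory))  # ['interview-scheduler']
```

Note that the `high_risk` flag plays no part in the filter: a system the provider considers exempt is still flagged if it sits within Annex III.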

5. Bias Detection: Strict Necessity Standard under Art. 4a

Art. 4a of the AI Act, introduced during trilogue, provided for the possibility of processing special categories of personal data (sensitive data under GDPR Art. 9: racial and ethnic origin, health data, sexual orientation, etc.) for the purpose of detecting and correcting bias in AI systems.

The political agreement reinstates the strict necessity standard for this processing, aligning with EDPB/EDPS Joint Opinion 1/2026. In practice, processing of sensitive data for bias detection is only permitted if strictly necessary for the purpose and not substitutable with less sensitive data. Organizations that have implemented — or are designing — fairness testing programs for their AI models must verify that the legal basis and proportionality of the processing meet this enhanced standard.

What to Do Now: The Dual-Track Strategy

The deadline extension is real, but it is not a pause. It is the time to build well what would have been built poorly in August 2026 — under pressure, without definitive standards, with resources devoted to contingency rather than quality.

The recommended operational strategy for organizations handling high-risk AI systems is the dual track:

- Track 1 — Fallback: keep the original August 2, 2026-oriented roadmap active. Do not dismantle compliance programs already underway. Work already completed (system classification, gap analysis, technical documentation) retains permanent value and accelerates conformity against the new deadline.

- Track 2 — Optimization: recalibrate investments toward the 2027-2028 horizon. The additional time allows waiting for final harmonized standards, building more mature AI governance systems, and integrating AI compliance into the product lifecycle rather than treating it as a standalone obligation.
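For planning purposes, the two tracks can be kept side by side in a small helper. The sketch below covers only standalone Annex III systems and uses the two dates given in this article; the constant and function names are assumptions for illustration, and `omnibus_adopted` is a flag the organization would set itself once formal adoption is complete.

```python
from datetime import date

ANNEX_III_FALLBACK = date(2026, 8, 2)   # original deadline, binding until formal adoption
ANNEX_III_TARGET = date(2027, 12, 2)    # fixed date under the political agreement


def binding_deadline(omnibus_adopted: bool) -> date:
    """Deadline to treat as legally binding for standalone Annex III systems."""
    return ANNEX_III_TARGET if omnibus_adopted else ANNEX_III_FALLBACK


def days_remaining(today: date) -> dict[str, int]:
    """Days left on each track, so both horizons stay visible in planning."""
    return {
        "track_1_fallback": (ANNEX_III_FALLBACK - today).days,
        "track_2_target": (ANNEX_III_TARGET - today).days,
    }


print(days_remaining(date(2026, 5, 15)))
# {'track_1_fallback': 79, 'track_2_target': 566}
```

Reporting both figures together makes it harder for internal communications to drift into treating the later date as already settled.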

Deadlines outside the high-risk extension require immediate attention: watermarking by December 2, 2026, GPAI model obligations, and absolute prohibitions. The calendar is not uniform, and planning as if everything had been postponed would be a mistake.

Institutional Next Steps

The May 7 agreement is political, not legally binding. The formal process requires:

- European Parliament plenary vote (expected June 2026)

- Formal Council adoption

- Publication in the EU Official Journal and entry into force

Until formal adoption, August 2, 2026 remains the only legally binding deadline. Organizations must keep this in mind in internal communications and with stakeholders: stating that "the AI Act has been postponed" would be technically inaccurate and operationally risky.

How does this impact your AI Act compliance program?

Tomato Blue helps SMEs and businesses classify AI systems, run gap analyses, and build roadmaps aligned with the new deadlines. Contact us for a no-commitment conversation.
